Privacy and Security

- Type: seminar

- Chair: KIT-Fakultäten - KIT-Fakultät für Informatik - KASTEL – Institut für Informationssicherheit und Verlässlichkeit - KASTEL Strufe

- Semester: winter of 2022/2023

- Lecturers: Prof. Dr. Thorsten Strufe, Dr. Christiane Kuhn, Marcel Tiepelt, Patricia Guerra-Balboa

- SWS: 2

- Lv-No.: 2400118


Information:

Submission link: https://easychair.org/conferences/?conf=ps2223

Content:

The seminar covers current topics in the research area of technical data protection, such as the topics listed below.

- Language: English

If you are enrolled in our Ilias course, we will make sure that you can participate by offering you a topic. If you're only on the waiting list for our Ilias course, feel free to still join our introduction and send your topic preferences. We will give you a topic if possible.

Important Dates:

- 25.10.2022, 14:00-15:30, building 50.34, room 252: Introduction (organization & topics)

- 1.11.2022 Topic preferences due

- 4.11.2022 Topic assignment

- 8.11.2022 Basic Skills

- 22.1.2023 Paper submission deadline

- 29.1.2023 Reviews due

- 5.2.2023 Revision deadline

- 9.+10.3.2023 Presentations

Topics

#1 Correlation framework in DP

Supervisor: Patricia Guerra-Balboa

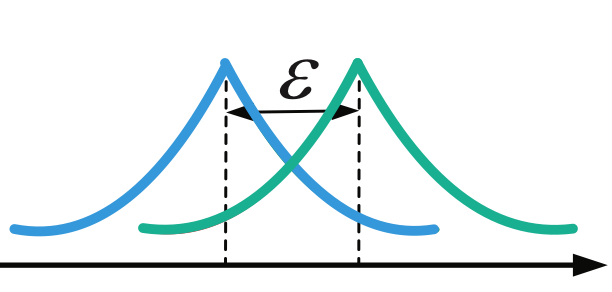

Differential Privacy (DP) has become the formal and de facto mathematical standard for privacy-preserving data release. However, several recent articles have demonstrated various shortcomings of this notion. Strong correlation in data is one example. DP inherently assumes that the database is a simple random sample, i.e., that its records are identically distributed (they follow the same probability distribution) and independent (in particular, uncorrelated). Unfortunately, this does not hold for many kinds of data and use cases, such as trajectory data.
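A minimal Python sketch of the problem, under an assumption introduced only for illustration: one individual contributes k perfectly correlated records (e.g., k points of the same trajectory). The Laplace mechanism below calibrates its noise to a per-record sensitivity of 1, so changing that individual shifts the count by k and, by the group-privacy property of DP, the guarantee for that individual degrades to k·ε. All function names are hypothetical; this is not one of the mechanisms from the references.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample: the difference of two i.i.d. exponentials
    with mean `scale` is Laplace-distributed."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def noisy_count(records, predicate, epsilon, sensitivity, rng):
    """Laplace mechanism for a counting query.

    Satisfies epsilon-DP only if changing one protected unit changes the
    true count by at most `sensitivity`.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(sensitivity / epsilon, rng)


def effective_epsilon(epsilon, calibrated_sensitivity, k):
    """Privacy loss for an individual behind k perfectly correlated records
    when noise was calibrated to `calibrated_sensitivity`: shifting a
    Laplace(b) output by k changes the density ratio by at most exp(k / b)."""
    return epsilon * k / calibrated_sensitivity


# Noise calibrated per record (sensitivity 1) at epsilon = 0.5 ...
rng = random.Random(42)
release = noisy_count([3, 7, 7, 7, 9], lambda r: r == 7, 0.5, 1, rng)
# ... but if the three 7s belong to one person, that person only gets 1.5-DP.
print(effective_epsilon(0.5, 1, 3))
```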

The goal of this project is, first, to understand and formalize proofs of why DP fails in the correlation framework; second, to explore new adaptations of DP that deal with correlation, particularly Bayesian DP, analyzing the privacy protection this notion offers and the mechanisms that achieve it; and finally, to test the utility it provides in different use cases.

- Advanced goal: if the student's time and motivation allow, we could go deeper into specific, more complex correlation cases, such as time-series autocorrelation.

- Topics: Privacy Foundations; Differential Privacy; Correlation; Privacy Analysis

- Background references: basic knowledge of DP (Dwork, Roth, et al., 2014; Kasiviswanathan and Smith, 2014) and of statistical analysis (Panaretos, 2016; Sylvan, 2013).

- References: Wang, Xu, Jia, Xia, and Zhang (2021); Zhang, Zhu, Liu, and Zhou (2022); Yang, Sato, and Nakagawa (2015).

- Advanced References: Chakrabarti, Gao, Saraf, Schoenebeck, and Yu (2022); Cao, Yoshikawa, Xiao, and Xiong (2018); Rafiei, Elkoumy, and van der Aalst (2022).

#2 Topology of privacy

Supervisor: Patricia Guerra-Balboa

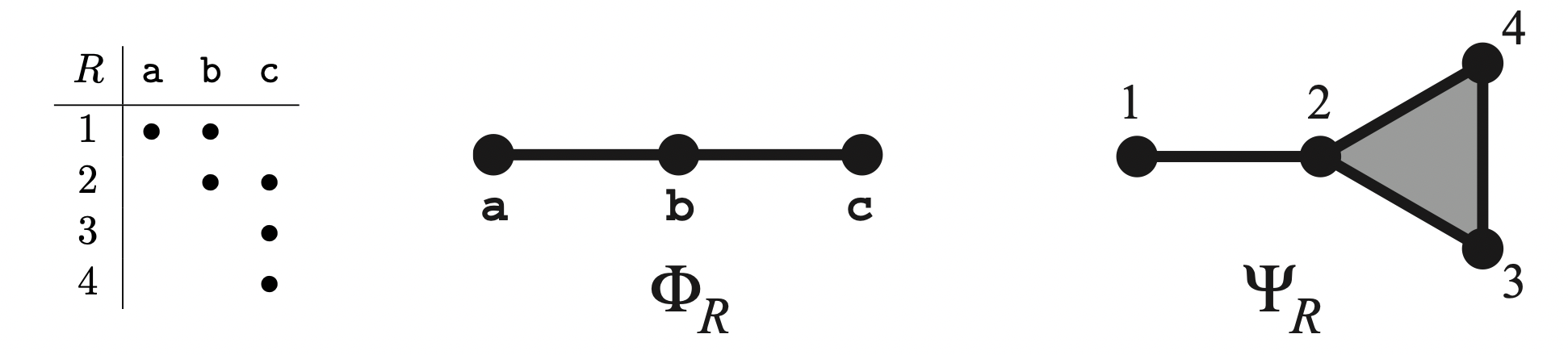

Information has intrinsic geometric and topological structure, arising from relative relationships beyond absolute values or types. For instance, the fact that two people did or did not share a meal describes a relationship independent of the meal's ingredients. Multiple such relationships give rise to relations and their lattices. Lattices have topology. That topology informs the ways in which information may be observed, hidden, inferred, and dissembled. Privacy preservation may be understood as finding isotropic topologies, in which relations appear homogeneous. Moreover, the underlying lattice structure of those topologies has a temporal aspect, which reveals how isotropy may contract over time, thereby puncturing privacy. The goal of this project is to understand privacy from the topological perspective using Dowker complexes.
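To make the central object concrete, here is a small Python sketch of the Dowker complex of a relation R ⊆ X × Y: a nonempty set of elements of X is a simplex exactly when some single y ∈ Y is related to all of them. The people-and-meals relation is a made-up example echoing the text above; the privacy interpretation (lattices, isotropy, information bubbles) is developed in Erdmann (2017) and is not captured by this code.

```python
from itertools import combinations


def dowker_complex(relation):
    """Return all simplices (as frozensets) of the Dowker complex on X.

    `relation` maps each y in Y to the set of x in X related to it.
    A nonempty set of X-elements is a simplex iff it is contained in
    relation[y] for some y, i.e. all its elements share a common y.
    """
    simplices = set()
    for row in relation.values():
        row = sorted(row)
        # Every nonempty subset of a row is a face of that row's simplex.
        for size in range(1, len(row) + 1):
            for face in combinations(row, size):
                simplices.add(frozenset(face))
    return simplices


def maximal_simplices(simplices):
    """Keep only simplices that are not strictly contained in another one."""
    return {s for s in simplices if not any(s < t for t in simplices)}


# Hypothetical relation: which people shared which meal.
meals = {
    "lunch": {"alice", "bob"},
    "dinner": {"bob", "carol"},
    "snack": {"alice", "carol"},
}
print(sorted(sorted(s) for s in maximal_simplices(dowker_complex(meals))))
```

Every pair of people shared some meal, but no meal was shared by all three, so the complex consists of three edges without a filled triangle. Looking only at pairs, this situation cannot be distinguished from one where all three shared a meal; the complex records the difference.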

- Advanced goal: once we understand how to measure privacy preservation and loss using topology, we could go further and survey topology-based privacy-enhancing techniques.

- Topics: Topology; Data structure; Privacy of relations

- References: Erdmann (2017)

References

- Cao, Y., Yoshikawa, M., Xiao, Y., & Xiong, L. (2018). Quantifying differential privacy in continuous data release under temporal correlations. IEEE Transactions on Knowledge and Data Engineering, 31 (7), 1281-1295.

- Chakrabarti, D., Gao, J., Saraf, A., Schoenebeck, G., & Yu, F.-Y. (2022). Optimal local Bayesian differential privacy over Markov chains. In AAMAS (pp. 1563-1565).

- Dwork, C., Roth, A., et al. (2014). The algorithmic foundations of differential privacy. Foundations and Trends® in Theoretical Computer Science, 9 (3-4), 211-407.

- Erdmann, M. (2017). Topology of privacy: Lattice structures and information bubbles for inference and obfuscation. arXiv preprint arXiv:1712.04130.

- Kasiviswanathan, S. P., & Smith, A. (2014). On the 'semantics' of differential privacy: A Bayesian formulation. Journal of Privacy and Confidentiality, 6 (1).

- Panaretos, V. M. (2016). Statistics for mathematicians. Compact Textbook in Mathematics. Birkhäuser/Springer, 142, 9-15.

- Rafiei, M., Elkoumy, G., & van der Aalst, W. M. (2022). Quantifying temporal privacy leakage in continuous event data publishing. arXiv preprint arXiv:2208.01886.

- Sylvan, D. (2013). Introduction to mathematical statistics: by R. V. Hogg, J. McKean, and A. T. Craig, Boston, MA: Pearson, 2012, ISBN 978-0-321-795434, x + 694 pp., 110.67. Taylor & Francis Group. Retrieved from https://minerva.it.manchester.ac.uk/~saralees/statbook2.pdf

- Wang, H., Xu, Z., Jia, S., Xia, Y., & Zhang, X. (2021). Why current differential privacy schemes are inapplicable for correlated data publishing? World Wide Web, 24 (1), 1-23.

- Yang, B., Sato, I., & Nakagawa, H. (2015). Bayesian differential privacy on correlated data. In Proceedings of the 2015 ACM SIGMOD International Conference on Management of Data (pp. 747-762).

- Zhang, T., Zhu, T., Liu, R., & Zhou, W. (2022). Correlated data in differential privacy: definition and analysis. Concurrency and Computation: Practice and Experience, 34 (16), e6015.

#3 Inference Attacks on Machine Learning Models using Auxiliary Knowledge

Supervisor: Felix Morsbach

The use of machine learning (ML) models has become ubiquitous in many fields. However, training ML models on sensitive data can entail a privacy risk, as information about the training data can often be inferred from the trained model [1]. Other privacy attacks often utilize auxiliary data, for example the de-anonymization of the Netflix Prize dataset [2]. However, the most prominent attacks on ML models do not incorporate such auxiliary data [1, 3]. The aim of this topic is to survey the existing literature on inference attacks on ML models and to analyze their (potential) use of auxiliary knowledge (e.g., the results or search history of a hyperparameter search). Based on these results, promising avenues for inference attacks on ML models that utilize auxiliary knowledge could be proposed.
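For orientation, a very simple membership inference attack is the loss-threshold attack analyzed by Yeom et al. [3]: predict "member" whenever the model's loss on a record is below a threshold such as the average training loss. The Python sketch below assumes the per-record losses are already available and uses invented numbers. Note that the attacker is assumed to know only the average training loss, not the richer auxiliary information this topic is about.

```python
from statistics import mean


def loss_threshold_attack(losses, threshold):
    """Predict membership (True) for every record whose loss is below threshold."""
    return [loss < threshold for loss in losses]


def membership_advantage(predictions, is_member):
    """True-positive rate minus false-positive rate of the attack."""
    tp = sum(p and m for p, m in zip(predictions, is_member))
    fp = sum(p and not m for p, m in zip(predictions, is_member))
    n_members = sum(is_member)
    n_non_members = len(is_member) - n_members
    return tp / n_members - fp / n_non_members


# Invented losses: an overfit model has lower loss on its training records.
train_losses = [0.05, 0.10, 0.02, 0.30]
test_losses = [0.90, 0.40, 1.20, 0.08]
threshold = mean(train_losses)  # assumed attacker knowledge: average training loss

predictions = loss_threshold_attack(train_losses + test_losses, threshold)
labels = [True] * len(train_losses) + [False] * len(test_losses)
print(membership_advantage(predictions, labels))  # 0.5
```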

[1] Shokri, R., M. Stronati, C. Song, and V. Shmatikov. "Membership Inference Attacks Against Machine Learning Models." In 2017 IEEE Symposium on Security and Privacy (SP), 3–18, 2017. https://doi.org/10.1109/SP.2017.41.

[2] Narayanan, A., and V. Shmatikov. "Robust De-Anonymization of Large Sparse Datasets." In 2008 IEEE Symposium on Security and Privacy (SP 2008), 111–25, 2008. https://doi.org/10.1109/SP.2008.33.

[3] Yeom, Samuel, Irene Giacomelli, Matt Fredrikson, and Somesh Jha. “Privacy Risk in Machine Learning: Analyzing the Connection to Overfitting.” In 2018 IEEE 31st Computer Security Foundations Symposium (CSF), 268–82, 2018. https://doi.org/10.1109/CSF.2018.00027.

#4 A relation between syntactic and semantic privacy notions

Supervisor: Alex Miranda Pascual

There exist two well-known families of privacy notions: syntactic and semantic. Syntactic notions specify conditions that an anonymized database should exhibit, while semantic notions describe guarantees that the anonymization mechanism should satisfy. Although the difference between them is apparent, there exists a relation [1] between two important representatives of each family: t-closeness (syntactic) and differential privacy (semantic). The goal of this seminar topic is to explore these two families, specifically by understanding how t-closeness can be ensured from differential privacy and vice versa, and what implications this relation might entail.
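As a concrete reference point for the syntactic side: t-closeness [4] requires that, in every equivalence class, the distribution of the sensitive attribute is within distance t of its distribution in the whole table. For an ordered attribute, Li et al. measure this with the Earth Mover's Distance under the ordered ground distance |i - j| / (m - 1), which reduces to summing absolute cumulative differences. The Python sketch below is a minimal illustration; the salary table and equivalence classes are made up.

```python
from collections import Counter
from fractions import Fraction


def distribution(values, domain):
    """Empirical distribution of `values` over the ordered `domain`."""
    counts = Counter(values)
    return [Fraction(counts[v], len(values)) for v in domain]


def ordered_emd(p, q):
    """Earth Mover's Distance between two distributions over an ordered
    domain of size m, with ground distance |i - j| / (m - 1)."""
    cumulative, total = Fraction(0), Fraction(0)
    for p_i, q_i in zip(p, q):
        cumulative += p_i - q_i
        total += abs(cumulative)
    return total / (len(p) - 1)


def satisfies_t_closeness(table_values, classes, domain, t):
    """Check t-closeness for a single ordered sensitive attribute."""
    overall = distribution(table_values, domain)
    return all(ordered_emd(distribution(c, domain), overall) <= t for c in classes)


# Hypothetical salaries (in thousands) split into three equivalence classes.
domain = [3, 4, 5, 6, 7, 8, 9, 10, 11]
classes = [[3, 4, 5], [6, 8, 11], [7, 9, 10]]
table = [v for c in classes for v in c]

print(ordered_emd(distribution(classes[0], domain), distribution(table, domain)))  # 3/8
```

The class of low salaries [3, 4, 5] is the one furthest from the overall distribution, so the table satisfies t-closeness for t = 3/8 but not for any smaller t.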

[1] J. Domingo-Ferrer, J. Soria-Comas (2015) "From t-closeness to differential privacy and vice versa in data anonymization". https://arxiv.org/abs/1512.05110

[2] J. Domingo-Ferrer, J. Soria-Comas (2018). "Connecting randomized response, post-randomization, differential privacy and t-closeness via deniability and permutation". arXiv preprint arXiv:1803.02139.

The papers introducing differential privacy and t-closeness are:

[3] C. Dwork, F. McSherry, K. Nissim, A. Smith (2006). Calibrating Noise to Sensitivity in Private Data Analysis. In: Halevi, S., Rabin, T. (eds) Theory of Cryptography. TCC 2006. Lecture Notes in Computer Science, vol 3876. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11681878_14

[4] N. Li, T. Li and S. Venkatasubramanian, "t-Closeness: Privacy Beyond k-Anonymity and l-Diversity," 2007 IEEE 23rd International Conference on Data Engineering, 2007, pp. 106-115, doi: 10.1109/ICDE.2007.367856.

#5 Measuring similarity of trajectories using learning methods

Supervisor: Alex Miranda Pascual

Nowadays, due to the expansion of geo-tracking devices, we have an unprecedented amount of human and vehicle trajectories. These trajectories are studied in very diverse areas, but here we are interested in a common problem: how to measure the similarity of two trajectories. Similarity measures play an important part in trajectory anonymization, where they can be used as an evaluation metric for the utility of the mechanisms or as cost functions in the actual anonymization process. Many similarity measures exist, but in this seminar topic we are interested in exploring similarity learning, the area of machine learning whose goal is to learn from data a measure of how similar two trajectories are. In short, we want to explore and survey these recent proposals and understand the advantages and disadvantages of such learned similarity measures.
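For contrast with learned measures, a classical hand-crafted baseline is Dynamic Time Warping (DTW), which aligns two point sequences of possibly different lengths and sums the point-wise distances along the cheapest alignment. The dynamic program below needs time proportional to the product of the two lengths; avoiding this quadratic cost is one motivation for learned approaches such as [2], whose title advertises linear-time similarity computation. This Python sketch uses plain Euclidean distance on (x, y) points and ignores timestamps, which is a simplifying assumption.

```python
import math


def dtw(a, b):
    """Dynamic Time Warping distance between two trajectories given as lists
    of (x, y) points, using Euclidean distance between matched points."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = DTW distance between the prefixes a[:i] and b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(a[i - 1], b[j - 1])
            cost[i][j] = d + min(
                cost[i - 1][j],      # a advances, b stays
                cost[i][j - 1],      # b advances, a stays
                cost[i - 1][j - 1],  # both advance
            )
    return cost[n][m]


t1 = [(0, 0), (1, 0), (2, 0)]
t2 = [(0, 0), (0.5, 0), (1, 0), (2, 0)]  # same path, sampled more densely
print(dtw(t1, t2))  # 0.5
```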

Possible initial references include:

[1] Z. Fang, Y. Du, X. Zhu, L. Chen, Y. Gao, C.S. Jensen. (2021) ST2Vec: Spatio-Temporal Trajectory Similarity Learning in Road Networks. arXiv preprint.

[2] D. Yao, G. Cong, C. Zhang, and J. Bi. Computing trajectory similarity in linear time: A generic seed-guided neural metric learning approach. In Proc. IEEE International Conference on Data Engineering, pages 1358–1369, 2019.

[3] H. Zhang, X. Zhang, Q. Jiang, B. Zheng, Z. Sun, W. Sun, and C. Wang. Trajectory similarity learning with auxiliary supervision and optimal matching. In Proc. International Joint Conference on Artificial Intelligence, pages 3209–3215, 2020.

[4] P. Yang, H. Wang, Y. Zhang, L. Qin, W. Zhang, and X. Lin. T3S: effective representation learning for trajectory similarity computation. In Proc. IEEE International Conference on Data Engineering, pages 2183–2188, 2021.

[5] P. Han, J. Wang, D. Yao, S. Shang, and X. Zhang. A graph-based approach for trajectory similarity computation in spatial networks. In Proc. ACM Knowledge Discovery and Data Mining, pages 556–564, 2021.

#6 How to adapt trajectory data into road networks

Supervisor: Alex Miranda Pascual

Trajectory data generated by users and road vehicles are usually represented as sequences of spatio-temporal points. In this seminar topic, we are interested in discovering how these points can be used to reconstruct city road networks. We aim to explore the current methodologies, discover possible privacy challenges and difficulties of such processes, and examine their uses in trajectory-data anonymization.
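A naive baseline for this reconstruction, far simpler than the methods in [1] and [3], is to rasterize all GPS points into a grid and keep cells that enough points fall into as candidate road cells. The Python sketch below does this; the cell size, thresholds, and the `min_users` filter are hypothetical choices for illustration, not taken from the cited papers. The filter hints at one privacy challenge: a cell visited by a single user (e.g., a private driveway) can reveal that user's individual routes.

```python
from collections import defaultdict


def road_cells(trajectories, cell_size, min_points, min_users=1):
    """Return grid cells that likely lie on roads.

    trajectories: dict mapping user id -> list of (x, y) points in metres
    (projected coordinates are assumed). A cell is kept if it contains at
    least `min_points` points and was visited by at least `min_users`
    distinct users (a hypothetical privacy-motivated filter).
    """
    points = defaultdict(int)
    users = defaultdict(set)
    for user, trajectory in trajectories.items():
        for x, y in trajectory:
            cell = (int(x // cell_size), int(y // cell_size))
            points[cell] += 1
            users[cell].add(user)
    return {c for c in points if points[c] >= min_points and len(users[c]) >= min_users}


trajectories = {
    "u1": [(5, 5), (15, 5), (25, 5)],
    "u2": [(6, 4), (16, 6), (26, 5)],
    "u3": [(5, 15), (5, 25)],  # a side street only u3 uses
}
print(sorted(road_cells(trajectories, cell_size=10, min_points=1)))
print(sorted(road_cells(trajectories, cell_size=10, min_points=1, min_users=2)))
```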

[1] D. Li, J. Li and J. Li. Road Network Extraction from Low-Frequency Trajectories Based on a Road Structure-Aware Filter. ISPRS International Journal of Geo-Information, article 374, 2019.

[2] T. Wang, D. Zhang, X. Zhou, X. Qi, H. Ni, H. Wang, and G. Zhou. Mining Personal Frequent Routes via Road Corner Detection. IEEE Transactions on Systems, Man, and Cybernetics: Systems, pages 445-458, 2016.

[3] S. He, F. Bastani, S. Abbar, M. Alizadeh, H. Balakrishnan, S. Chawla and S. Madden. RoadRunner: Improving the Precision of Road Network Inference from GPS Trajectories. In Proc. ACM SIGSPATIAL International Conference on Advances in Geographic Information Systems, 2018.

#7 Anonymous Key Exchange

Supervisors: Christoph Coijanovic + Marcel Tiepelt

Key exchange is an integral part of encrypted communication. If the communication should also be anonymous, the key exchange needs additional protection as well. Otherwise, an adversary may, for example, be able to link communication partners through their key exchange messages, even if the communication itself is fully anonymized.
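The linkability problem can be illustrated with a toy, unauthenticated finite-field Diffie-Hellman exchange (insecure parameters, for illustration only). If a party sends the same static public value in every session, a passive observer can link those sessions just by comparing messages, even though the shared keys stay secret; fresh ephemeral values avoid this particular leak. The group, names, and session structure below are assumptions of this sketch. Real anonymous key exchange protocols such as [1]-[5] must handle much more, e.g. authentication without revealing identities.

```python
import secrets

# Toy group: arithmetic modulo the Mersenne prime 2**127 - 1 with base 3.
# Illustration only; these are NOT secure Diffie-Hellman parameters.
P = 2**127 - 1
G = 3


def keypair():
    """Return (secret exponent x, public value G**x mod P)."""
    x = secrets.randbelow(P - 2) + 1
    return x, pow(G, x, P)


def session(alice_key=None):
    """One unauthenticated DH exchange between Alice and Bob.

    If `alice_key` is given, Alice reuses that static key pair instead of a
    fresh ephemeral one. Returns the public messages (A, B) that a passive
    observer sees, plus both parties' derived shared secrets.
    """
    a, A = alice_key if alice_key is not None else keypair()
    b, B = keypair()
    return (A, B), pow(B, a, P), pow(A, b, P)


alice_static = keypair()
msgs1, k_alice, k_bob = session(alice_static)
msgs2, _, _ = session(alice_static)
print(k_alice == k_bob)      # True: both sides derive the same secret
print(msgs1[0] == msgs2[0])  # True: the reused static value links both sessions
print(session()[0][0] == session()[0][0])  # False with overwhelming probability
```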

In recent years, anonymous key exchange protocols for multiple settings have been proposed, e.g.,

- Instant messaging [1]

- Smart Grids [2]

- Vehicular Networks [3,4]

- Quantum Networks [5]

Your task is to survey existing approaches for anonymous key exchange.

Each approach should be analyzed and categorized based on its notion of anonymity, adversary model, provided functionality, etc.

[1] Dowling, Benjamin, Eduard Hauck, Doreen Riepel and Paul Rösler. “Strongly Anonymous Ratcheted Key Exchange.” (2022).

[2] Srinivas, Jangirala, Ashok Kumar Das, Xiong Li, Muhammad Khurram Khan, and Minho Jo. “Designing Anonymous Signature-Based Authenticated Key Exchange Scheme for Internet of Things-Enabled Smart Grid Systems.” IEEE Transactions on Industrial Informatics 17 (2021).

[3] AlMarshoud, Mishri Saleh, Ali H. Al-Bayatti and Mehmet Sabir Kiraz. “Location Privacy in VANETs: Provably secure anonymous key exchange protocol based on self-blindable signatures.” Veh. Commun. 36 (2022).

[4] Vijayakumar, Pandi, Maria Azees, Sergei A. Kozlov and Joel José P. C. Rodrigues. “An Anonymous Batch Authentication and Key Exchange Protocols for 6G Enabled VANETs.” IEEE Transactions on Intelligent Transportation Systems 23 (2022).

[5] Ruckle, Lukas, Jakob Budde, Jarn de Jong, Frederik Hahn, Anna Pappa and Stefanie Barz. “Experimental anonymous conference key agreement using linear cluster states.” (2022).

#8 A survey on brain-computer interfaces (BCI)

Supervisor: Matin Fallahi

With the development of consumer-grade brainwave devices, BCIs are gaining many practical applications. As a first step, we want to get an overview of the BCI field and then focus on the details of data collection protocols for different BCI applications.

[1] Ramzan M, Dawn S. A survey of brainwaves using electroencephalography (EEG) to develop robust brain-computer interfaces (BCIs): Processing techniques and algorithms. In 2019 9th International Conference on Cloud Computing, Data Science & Engineering (Confluence) 2019 Jan 10 (pp. 642-647). IEEE.

[2] Vasiljevic GA, de Miranda LC. Brain–computer interface games based on consumer-grade EEG Devices: A systematic literature review. International Journal of Human–Computer Interaction. 2020 Jan 20;36(2):105-42.

#9 Privacy-preserving biometric authentication (PPBA): third-party approach

Supervisor: Matin Fallahi

There are a number of protocols offering privacy-preserving biometric authentication. In addition to an overview of the state of the art, we will look at approaches that take advantage of third parties. This survey will focus on homomorphic encryption (HE) based solutions and the feature extraction methods associated with them (PPBA -> third-party -> HE).
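To give a flavour of HE-based matching, the Python sketch below uses a toy Paillier cryptosystem (additively homomorphic) to compute the squared Euclidean distance between an encrypted enrolled template and a plaintext probe, so the matching party never sees the template; only the holder of the secret key can decrypt the distance and compare it with a threshold. The tiny primes, integer feature vectors, and this particular split of roles are assumptions for illustration only. The cited schemes differ in which party holds which keys and data, and [2], for example, uses fully homomorphic encryption instead.

```python
import math
import random

# Toy Paillier key from tiny primes (insecure; illustration only).
P, Q = 1009, 1013
N = P * Q
N2 = N * N
LAM = math.lcm(P - 1, Q - 1)  # Carmichael function of N (Python 3.9+)
MU = pow(LAM, -1, N)          # inverse of LAM mod N; valid for generator g = N + 1


def encrypt(m, rng=random):
    """Enc(m) = (N+1)^m * r^N mod N^2 with random r coprime to N."""
    r = rng.randrange(1, N)
    while math.gcd(r, N) != 1:
        r = rng.randrange(1, N)
    return pow(N + 1, m % N, N2) * pow(r, N, N2) % N2


def decrypt(c):
    """m = L(c^LAM mod N^2) * MU mod N, where L(u) = (u - 1) // N."""
    return (pow(c, LAM, N2) - 1) // N * MU % N


def add(c1, c2):
    """Enc(m1) * Enc(m2) decrypts to m1 + m2."""
    return c1 * c2 % N2


def mul_const(c, k):
    """Enc(m)^k decrypts to k * m mod N (k may be negative)."""
    return pow(c, k % N, N2)


def encrypted_sq_distance(enc_template, enc_sq_norm, probe):
    """Enc(sum (x_i - y_i)^2) = Enc(sum x_i^2) + sum(-2 y_i * Enc(x_i)) + Enc(sum y_i^2)."""
    c = add(enc_sq_norm, encrypt(sum(y * y for y in probe)))
    for cx, y in zip(enc_template, probe):
        c = add(c, mul_const(cx, -2 * y))
    return c


# Enrollment (hypothetical integer features): only ciphertexts are stored.
template = [3, 7, 1, 4]
enc_template = [encrypt(x) for x in template]
enc_sq_norm = encrypt(sum(x * x for x in template))

# Authentication: the matcher combines ciphertexts with a fresh probe.
probe = [2, 7, 2, 5]
print(decrypt(encrypted_sq_distance(enc_template, enc_sq_norm, probe)))  # 3
```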

[1] Rui Z, Yan Z. A survey on biometric authentication: Toward secure and privacy-preserving identification. IEEE access. 2018 Dec 27;7:5994-6009.

[2] Morampudi MK, Prasad MV, Raju US. Privacy-preserving iris authentication using fully homomorphic encryption. Multimedia Tools and Applications. 2020 Jul;79(27):19215-37.

[3] Chun H, Elmehdwi Y, Li F, Bhattacharya P, Jiang W. Outsourceable two-party privacy-preserving biometric authentication. In Proceedings of the 9th ACM Symposium on Information, Computer and Communications Security 2014 Jun 4 (pp. 401-412).

[4] Privacy-Preserving Biometric Authentication (PhD thesis): https://doi.org/10.26190/unsworks/24028.

#10 Explainable machine learning for brainwaves

Supervisor: Matin Fallahi

The term explainability in machine learning refers to the ability to explain what happens inside a model on the way from input to output. We are looking for papers that offer explainable machine learning on electroencephalograms (EEGs). The purpose of this seminar work is to research, categorize, and analyze works dealing with explainable machine learning on EEG signals.
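One widely used, model-agnostic explanation technique (not necessarily the one used in the references below) is permutation feature importance: shuffle one input feature, such as one EEG channel's band power, across samples and measure how much the model's accuracy drops. The Python sketch assumes a hypothetical, already trained classifier `predict` and synthetic data in place of real EEG features.

```python
import random


def accuracy(predict, X, y):
    """Fraction of samples whose prediction matches the label."""
    return sum(predict(x) == label for x, label in zip(X, y)) / len(y)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean accuracy drop when one feature column is shuffled across samples."""
    rng = random.Random(seed)
    baseline = accuracy(predict, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [x[j] for x in X]
            rng.shuffle(column)
            X_perm = [x[:j] + [v] + x[j + 1:] for x, v in zip(X, column)]
            drops.append(baseline - accuracy(predict, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Synthetic stand-in for EEG features: feature 0 (e.g. band power of one
# channel) determines the label, feature 1 is pure noise.
data_rng = random.Random(1)
X = [[data_rng.gauss(0, 1), data_rng.gauss(0, 1)] for _ in range(200)]
y = [x[0] > 0 for x in X]


def predict(x):
    """Hypothetical trained classifier that relies only on feature 0."""
    return x[0] > 0


print(permutation_importance(predict, X, y))  # large for feature 0, 0.0 for feature 1
```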

[1] Ieracitano C, Mammone N, Hussain A, Morabito FC. A novel explainable machine learning approach for EEG-based brain-computer interface systems. Neural Computing and Applications. 2022 Jul;34(14):11347-60.

[2] Svetlakov M, Kovalev I, Konev A, Kostyuchenko E, Mitsel A. Representation Learning for EEG-Based Biometrics Using Hilbert–Huang Transform. Computers. 2022 Mar 20;11(3):47.

[3] Al Hammadi AY, Yeun CY, Damiani E, Yoo PD, Hu J, Yeun HK, Yim MS. Explainable artificial intelligence to evaluate industrial internal security using EEG signals in IoT framework. Ad Hoc Networks. 2021 Dec 1;123:102641.

#11 A survey on privacy of ubiquitous EMR receivers

Supervisor: Julian Todt

Nowadays, devices that measure electromagnetic radiation (EMR) are ubiquitous in everyday life (antennas, cameras, etc.). While it is common knowledge that visible-light cameras can be used to identify individuals, there has also been increasing research into whether other EMR receivers can be used for privacy inferences. The goal of this seminar topic is to survey existing work analyzing the privacy impacts of common EMR receivers. Technologies to consider include, but are not limited to, thermal imaging [0], depth imaging (LiDAR) [1], X-rays, MRI [2], WiFi (+localization) [3] and 5G (+beamforming).

[0] Anghelone, David, Cunjian Chen, Arun Ross, and Antitza Dantcheva. “Beyond the Visible: A Survey on Cross-Spectral Face Recognition.” arXiv, May 5, 2022. http://arxiv.org/abs/2201.04435.

[1] A. Wu, W. -S. Zheng and J. -H. Lai, "Robust Depth-Based Person Re-Identification," in IEEE Transactions on Image Processing, vol. 26, no. 6, pp. 2588-2603, June 2017, doi: 10.1109/TIP.2017.2675201.

[2] Schwarz CG, Kremers WK, Therneau TM, et al. Identification of Anonymous MRI Research Participants with Face-Recognition Software. The New England Journal of Medicine. 2019 Oct;381(17):1684-1686. DOI: 10.1056/nejmc1908881. PMID: 31644852; PMCID: PMC7091256.

[3] Y. Zeng, P. H. Pathak and P. Mohapatra, "WiWho: WiFi-Based Person Identification in Smart Spaces," 2016 15th ACM/IEEE International Conference on Information Processing in Sensor Networks (IPSN), 2016, pp. 1-12, doi: 10.1109/IPSN.2016.7460727.

#12 A survey on video anonymization

Supervisor: Julian Todt

There has been significant research on anonymization methods for images, particularly on modifying faces so that individuals can no longer be identified. As more and more videos of individuals are collected every day, we consider the additional privacy and utility requirements of video data compared to images. The goal of this seminar topic is to survey anonymization methods that have been designed for videos.

[0] Meden, Blaz, Peter Rot, Philipp Terhörst, Naser Damer, Arjan Kuijper, Walter J. Scheirer, Arun Ross, Peter Peer, and Vitomir Štruc. "Privacy–Enhancing Face Biometrics: A Comprehensive Survey." IEEE Transactions on Information Forensics and Security 16 (2021): 4147–83. https://doi.org/10.1109/TIFS.2021.3096024.

[1] Wang, Han, Shangyu Xie, and Yuan Hong. “VideoDP: A Universal Platform for Video Analytics with Differential Privacy.” arXiv, September 20, 2019. http://arxiv.org/abs/1909.08729.

[2] Wen, Yunqian, Bo Liu, Rong Xie, Jingyi Cao, and Li Song. "Deep Motion Flow Aided Face Video De-identification." In 2021 International Conference on Visual Communications and Image Processing (VCIP), pp. 1-5. IEEE, 2021.

#13 Modelling and Synthesizing Human Motion

Supervisor: Simon Hanisch

Human motion is a complex process that is influenced by a wide range of parameters such as physiology, fitness, or metabolism. Motions like walking (gait) are biometric traits that can be used to identify people and to infer private attributes like gender. Hence, anonymization methods are required to protect people's privacy. However, this is a challenging task due to the complex interdependencies that influence human motion, as these interdependencies can be used to reconstruct the original motion. To overcome this problem, human motion needs to be modelled and synthesized. The goal of this seminar is to perform a high-level survey of which methods can be used to model and synthesize human motion and how the different methods compare to each other.
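As a very simple example of what synthesizing a motion from a model can mean, the classic minimum-jerk model predicts a smooth point-to-point reaching movement whose position follows x(t) = x0 + (xf - x0)(10τ³ - 15τ⁴ + 6τ⁵) with normalized time τ = t/T. This is a different optimality criterion from the minimum torque-change model of Uno et al. and is far from a full gait model; the Python sketch below only samples one coordinate of such a movement, and the parameter names are our own.

```python
def minimum_jerk(x0, xf, duration, steps):
    """Sample a 1-D minimum-jerk trajectory from x0 to xf.

    Returns (time, position) pairs at steps + 1 evenly spaced instants.
    Velocity and acceleration are zero at both endpoints.
    """
    samples = []
    for k in range(steps + 1):
        tau = k / steps  # normalized time t / T in [0, 1]
        s = 10 * tau**3 - 15 * tau**4 + 6 * tau**5
        samples.append((tau * duration, x0 + (xf - x0) * s))
    return samples


# A hypothetical 30 cm reach lasting one second, sampled every 0.1 s.
trajectory = minimum_jerk(0.0, 0.3, 1.0, 10)
for t, x in trajectory:
    print(f"t={t:.1f}s  x={x:.4f}m")
```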

- Human motion modelling and recognition: A computational approach, Bruno et al.

- Predictive Gait Simulations of Human Energy Optimization, Koelewijn et al.

- Formation and Control of Optimal Trajectory in Human Multijoint Arm Movement, Uno et al.

- A geometry- and muscle-based control architecture for synthesising biological movement, Walter et al.

#14 Survey on 5G Security and beyond

Supervisor: Kamyar Abedi

Since its establishment, cellular communication has grown steadily and is now moving toward its sixth generation (6G). 5G networks already introduced various new services and use cases leveraging advanced technologies, including but not limited to the Internet of Things (IoT), massive MIMO, Device-to-Device (D2D) communication, Vehicle-to-Everything (V2X) communication, and VR/AR applications. 5G integrates enabling technologies such as edge computing, Network Function Virtualization (NFV), and Software-Defined Networks (SDN) to support a broad range of use cases and application scenarios. These advanced technologies have given rise to significant security and privacy challenges that need to be addressed in the next-generation (6G) cellular network. To enhance subscriber privacy and security in 6G networks, this seminar work aims to conduct a survey and literature review of the 5G Radio Access Network (RAN) in order to discover vulnerabilities that affect subscriber privacy and security; the analysis may extend into the 5G Core.

[1] D. Rupprecht, K. Kohls, T. Holz, and C. Pöpper, “Breaking LTE on Layer Two,” in IEEE Symposium on Security & Privacy (SP). IEEE, 2019.

[2] A. Shaik, R. Borgaonkar, N. Asokan, V. Niemi, and J.-P. Seifert, “Practical Attacks Against Privacy and Availability in 4G/LTE Mobile Communication Systems,” in Symposium on Network and Distributed System Security (NDSS). ISOC, 2016.

[3] S. R. Hussain, O. Chowdhury, S. Mehnaz, and E. Bertino, “LTEInspector: A Systematic Approach for Adversarial Testing of 4G LTE,” in Symposium on Network and Distributed System Security (NDSS). ISOC, 2018.

[4] A. Shaik, R. Borgaonkar, S. Park, and J.-P. Seifert, “New Vulnerabilities in 4G and 5G Cellular Access Network protocols : Exposing Device Capabilities,” in Conference on Security & Privacy in Wireless and Mobile Networks (WiSec). ACM, 2019.

[5] S. R. Hussain, M. Echeverria, O. Chowdhury, N. Li, and E. Bertino, "Privacy Attacks to the 4G and 5G Cellular Paging Protocols Using Side Channel Information," in Symposium on Network and Distributed System Security (NDSS). ISOC, 2019.